The Architecture of Conversation Theory

Copyright © 1989, 2002 Paul Pangaro. All Rights Reserved.

1. Technique

The modeling technique described here is derived from Pask's Conversation Theory (CT), especially as presented in "An Approach to Machine Intelligence" (reprinted in Soft Architecture Machines, edited by Negroponte, MIT Press, 1975). There, Pask presents a formalism for describing the architecture of interactions or conversations, no matter where they may arise or among what types of entities. Because that formalism emerged from Conversation Theory, and indeed is one of its foundations, I refer to it in this paper as the CT formalism, and to the modeling that emerges from it, as detailed below, as CT modeling. (This can be misleading, because the models described here are only half the story of CT. The other half, so to speak, is that of entailment meshes, described elsewhere (Pask, Conversation Theory, Applications in Education and Epistemology, Amsterdam and New York: Elsevier Publishing Co., 1976), which capture the meaning that is conveyed in the conversations whose architectural structure is expressed in the models described here.)

The explanation that follows is couched in the example of modeling an organization or company because it was first written during a consulting project with Du Pont. One of the goals of that project, undertaken at the behest of Dr Michael C. Geoghegan, a research fellow at Du Pont at the time, was to understand the evolution of the social, political, bureaucratic, and technical conversations that were the Du Pont company. We began a modeling process, based on the formalism described below, and produced a series of snapshots of Du Pont, each at a different point in the structure of conversations. This gave us insight into how the company had become what it had become, and also into how interventions in the conversations current in the late 1980s might bring about desired change.

Though the modeling technique is described here with specific examples from that project, the power and beauty of Pask's work is that it can be applied to any observable interaction between participants in a conversation. This generality applies in two ways:

- to the domain being modeled, whether the organizational machinations alluded to above, dialogs about learning, interpersonal relationships in or out of crisis, or indeed any dance of relationship among language-based beings;

- to where the conversation is embodied, viz., conversations among individual humans, among groups or social systems (Republicans and Democrats, zealots and agnostics), or even within a single human, as when our "inner dialog" across multiple and often conflicting perspectives leads to new insights and evolution in our belief systems.

In addition to that broad scope of potential application, this modeling technique is useful because it expresses the interactions among participants in any dialog in a manner that allows deep scrutiny. Because it arises from a cybernetic sensibility, the models produced will encompass the goals, actions, information flows, feedback, and adjustments that any cybernetic model will display. The stunning power of the technique, however, lies in the insights that emerge about the "architecture" of the conversation. The diagrams capture the hierarchy of goals and actions (the objective interactions) as well as peer-to-peer language exchanges (the subjective interactions) in the same frame. As a result, complex and otherwise vague concepts, including intelligence, agreement, and misunderstanding, become specific and indeed measurable.

The goal of this text is to make Pask's formalism more accessible by drawing out its components via examples and expressing the dynamics in terms different from those of Pask's original pieces. The scope is restricted to walking through the elements of the technique, how it might be used to examine the degree of consistency in systems, and what might constitute their being "intelligent." However, it must be acknowledged that this text takes only a small step toward explaining the workings and power of CT modeling.

2. Benefits

For the remainder of the text, examples will be given for the case of modeling organizations, but the reader is invited to keep in mind that, as noted above, any conversation can be modeled using CT. Hence wherever the word "organization" appears below, the reader may substitute "system", "family", "conversation", or "person."

CT modeling is useful because it:

- provides a simple and informative diagram of the observed levels and relations within an organization

- distinguishes between interactions under the control of the organization (i.e., internal ones, performed by management fiat) and those not under its direct control (i.e., external ones, negotiated with the environment)

- produces an image of the implied as well as the expressed goals of an organization

- gives a strict definition of "intelligent behavior", and hence allows any given organization to be evaluated against that definition

- exposes specific organizational pathologies, such as

  - a mismatch between the "managerial structure" and the working dynamic that actually controls the organization

  - lack of action or lack of feedback, whether within levels of an organization or between the organization and its environment

- allows close examination of the form of the flow of control and the feedback of results

- points to where changes must be made to maintain intelligent behavior and viability as the environment evolves

- predicts potentially unsuccessful communication with other systems, individuals, or bodies corporate

- shows how a message may be internally inconsistent and hence misunderstood, or intentionally deceptive

- shows how a message may be in conflict with the receiver's purposes.

The components of this general modeling technique are explained in the next section.

3. Model Diagramming

First, a general overview of the two classes of interaction is given, and then the details of each are diagrammed and described.

3.1. Classes of Interaction

Interactions are modeled in two classes, summarized here and detailed in the subsections that follow:

1. Interactions that involve control of one process by another. Some examples are:

   a. when the organization of a corporation, in its "upper management", controls the procedures carried out by manufacturing

   b. when the corporation attempts to control a marketplace by directly determining price or production

   c. when management controls the workers by hiring and firing at will.

   These interactions are drawn vertically, and thereby represent the strict hierarchy of these relationships. In the diagrams, specific flows of control and feedback up and down the hierarchy are shown. Quite often these interactions represent what occurs only within a system (a and c above), though actions that attempt to control the environment can also be shown (b above).

2. The second class consists of interactions in which an individual or system engages another in dialog, and where each has a say in the outcome. Some examples are:

   a. when a corporation negotiates with another to arrange a contract

   b. when the corporation's advertising campaign engages in dialog with customers at one level, and the sales process and the product itself do so at others

   c. when the manager discusses a worker's future and lays out options.

   These interactions are drawn horizontally to show that one side cannot control the other but must influence it through conversation. In the diagrams, specific flows of information between participants are shown at various levels. Quite often these interactions represent what occurs across a boundary between two systems (a, b, and c just above), but interactions across boundaries within a given system (say, between divisions of a large corporation) can also be shown.

The next two subsections show each of these classes of interaction, vertical and horizontal, in greater detail.

3.2. Vertical or "it-referenced" Interactions

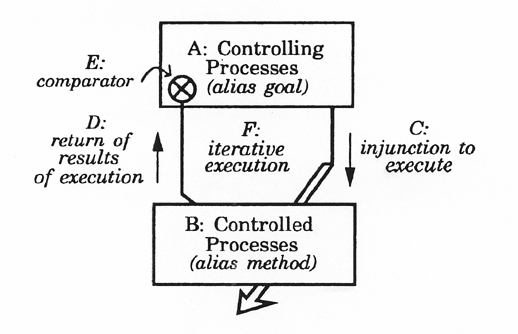

Figure 1

Figure 1 is the basic diagram of control and feedback between two given levels of an organization, showing the "it-referenced" interactions that are drawn in the vertical dimension. This interaction is called "it-referenced" because the controlling process treats the controlled process as an object without choice, that is, as an "it." The interaction is "it-referenced" whether the "it" is animate or inanimate.

A: "Controlling Processes (alias goal)" are, for example, management policies that are defined at this level but carried out at another. The distinction of levels is made in the course of the modeling process. The precise levels are chosen to display the flows of control and feedback that are of interest.

B: "Controlled Processes (alias method)" are, for example, the activities of manufacturing that carry out the goals as indicated (and dictated) by the level above.

C: "Injunction to execute" is the actual line of control that causes the lower level to respond, for example, a memorandum indicating the start of a project, or a budget authorization.

D: "Return of results of execution" is the actual feedback of information to the higher level, for example, a report indicating the results of specific manufacturing procedures, or an internal survey.

E: "Comparator" is the specific mechanism whereby the feedback information is used by comparing the actual result to the desired result, or original goal.

F: "Iterative execution" of the entire loop takes into account the result from the comparator (E) and makes changes in the various processes, flows of control and feedback, and so on, to make the entire loop more effective.

Closure occurs when the comparator confirms that the execution of the controlled processes is coherent with the controlling processes (as when a goal is achieved by executing a successful method). If all of the above aspects are present (including modifications based on feedback and iterative execution, F), the system of interactions is deemed "intelligent."
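To make elements A through F concrete, the loop can be sketched in Python as a small feedback controller. This is a minimal sketch built on assumptions of our own, not Pask's formalism:

- the goal is reduced to a single target number;
- the method is reduced to a function of one hypothetical "setting" (say, a budget level);
- the comparator is a numerical difference;
- iterative execution is a simple proportional adjustment.

The class name and the `setting`, `tolerance`, and `step` parameters are illustrative inventions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VerticalLoop:
    """Hypothetical encoding of the it-referenced loop (elements A-F).

    The names and the numeric representation are illustrative assumptions,
    not part of the CT formalism itself.
    """
    goal: float                       # A: controlling process, reduced to a target value
    method: Callable[[float], float]  # B: controlled process, driven by a setting
    setting: float                    # parameter carried by the injunction
    tolerance: float = 0.01           # how close counts as "achieved" (assumed)
    step: float = 0.5                 # proportional adjustment rate (assumed)

    def execute(self) -> float:
        # C: injunction to execute; the return value plays the role of D
        return self.method(self.setting)

    def compare(self, result: float) -> float:
        # E: comparator, the gap between desired and actual result
        return self.goal - result

    def run(self, max_iterations: int = 50) -> tuple[bool, int]:
        # F: iterative execution; adjust the setting using the comparator output
        for i in range(1, max_iterations + 1):
            error = self.compare(self.execute())
            if abs(error) <= self.tolerance:
                return True, i  # closure: method is coherent with goal
            self.setting += self.step * error
        return False, max_iterations  # no closure within the iteration budget


# A method whose output tracks its setting one-for-one reaches closure.
loop = VerticalLoop(goal=100.0, method=lambda s: s, setting=0.0)
print(loop.run())  # (True, 15)

# A method capped at 60 units can never satisfy a goal of 100.
capped = VerticalLoop(goal=100.0, method=lambda s: min(s, 60.0), setting=0.0)
print(capped.run())  # (False, 50)
```

The second case illustrates that iteration alone does not guarantee closure. The loop keeps executing and adjusting, but the method cannot achieve the goal, so the comparator never confirms coherence.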

It must be emphasized that the two levels shown are only two of possibly many vertical levels; applying CT modeling to a system of any complexity leads to multiple vertical layers in the conversation. Hence a box that appears at the "lower level" in one interaction may itself be at the "higher level" relative to a further box that appears below it.
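Because "higher" and "lower" are relative, the role a box plays can only be stated with respect to a particular neighbor. The following Python sketch assumes, purely for illustration, that a vertical chain of boxes is listed from top to bottom. The box names and the `roles` helper are hypothetical.

```python
def roles(hierarchy: list[str], box: str) -> dict[str, str]:
    """Map each vertical neighbor of `box` to the role `box` plays relative to it.

    `hierarchy` lists boxes from the highest level to the lowest (assumed layout).
    """
    i = hierarchy.index(box)
    result: dict[str, str] = {}
    if i > 0:
        # Relative to the box above, this box is the controlled process (method).
        result[hierarchy[i - 1]] = "method"
    if i < len(hierarchy) - 1:
        # Relative to the box below, this box is the controlling process (goal).
        result[hierarchy[i + 1]] = "goal"
    return result


chain = ["corporate strategy", "product planning", "manufacturing"]
print(roles(chain, "product planning"))
# {'corporate strategy': 'method', 'manufacturing': 'goal'}
```

The middle box is simultaneously a "method" with respect to the box above it and a "goal" with respect to the box below it. This is why any attribution of goal or method must name the neighbor it is relative to.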

3.3. Horizontal or "I/you referenced" Interactions

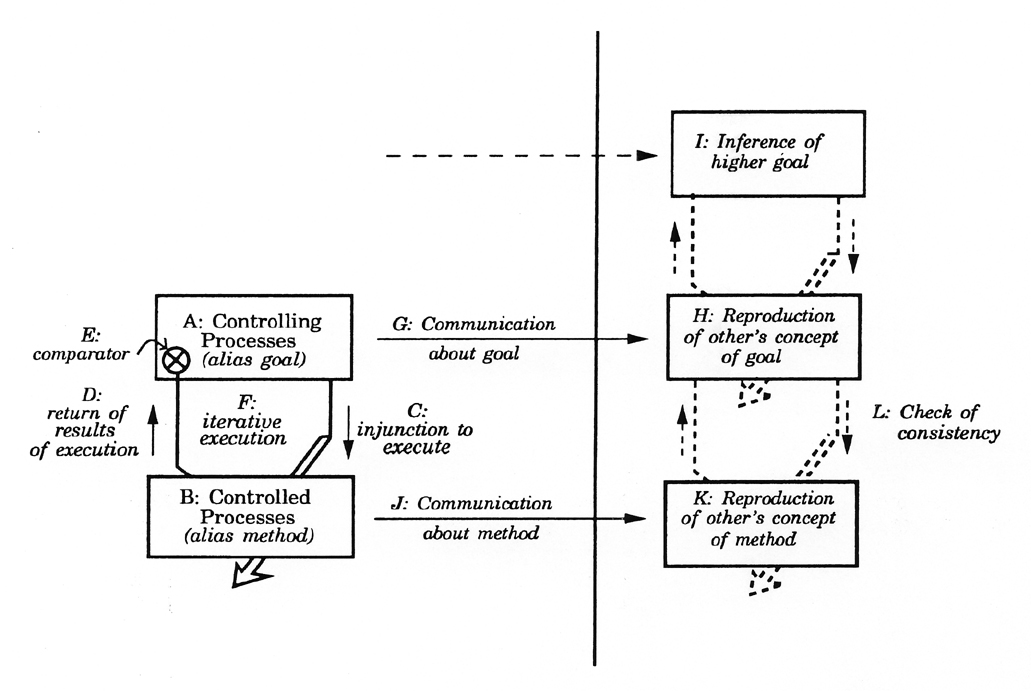

Figure

2 shows a skeleton diagram used to express the horizontal, "I/you

referenced" interactions between two given systems. This interaction is

called "I/you" because there is no controlling or controlled process;

each side is a participant.

(It is important to note that only one direction of a completely symmetric interaction is shown.)

Solid

lines are explicit communications, though they require interpretation by the

recipient and are not perfect or unambiguous or objective. Dashed lines of the

Figure indicate implied or inferred information.

Figure 2

The elements of the interaction are now presented, in an order chosen for ease of exposition:

G: "Communication about goal" is, for example, the communication to a customer that the company's stated "value proposition" is to provide the products with the best cost/benefit ratio, or durability, for a given application; or, to an employee, that the company considers the employee to be an essential asset for its future.

H: The actual result of the communication is different from what came from the "sender." ("Sender" and "receiver" are always held in quotation marks, to emphasize that the terms are very different from those used in information theory. However, the terms are universal, evocative, and useful, and are used here with a less restricted and more powerful meaning than in information theory.) Hence the "Reproduction of other's concept of goal" is a separate element. Initially this may be taken by the "receiver" at face value as true and relatively well understood, though later operations may modify the situation.

I: "Inference of higher goal" is the production of a higher goal with which the previous communication is consistent and which it affirms. This is as if the "sender" had actually exchanged something at this level (shown as the upper, dashed arrow), but in fact nothing has actually been "transferred" at this level up to this point. Quite often, the context or the common experience of the two conversants provides enough for a higher-level goal to be inferred. Sometimes, however, the "sender" creates a false context to encourage an incorrect inference to the "sender's" advantage, as, for example, when advertisers imply that a food product is healthy simply because it uses the word "natural", or when a seller simply states "I have your interests at heart" without having demonstrated this to be the case.

J: "Communication about method" is, for example, the communication to a customer about the details of a product's capabilities (which should affirm its stated goals, G); or, an exchange with an employee about the details of working conditions and health benefits from the corporation (which should show the method by which that employee is to be considered an asset to the corporation, relative to the goal as communicated in G).

K: "Reproduction of other's concept of method", as in H above, is subject to interpretation and later modification.

L: "Check of consistency" is a reproduction in the "receiver" of the entire loop (as per the vertical interactions described in Section 3.2). This may show consistency between the upper and lower levels, and thereby affirm understanding of the "sender's" message. Of course, this understanding can at best be very close to the intended message, and at worst may be only a small fraction of it. Alternatively, the consistency check can expose an inconsistency between the communicated goal and method. The "receiver" can then either query the "sender" about intended meanings (not shown in the diagram) or maintain a model of the perceived inconsistency in the "sender."
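The "receiver's" side of Figure 2 (elements H, K, and L) can be sketched in Python as follows. Two simplifications are assumed for illustration only:

- message content is represented by sets of text labels;
- interpretation is represented by a lookup table.

This text explicitly leaves the content of messages outside the model, so the sketch is only a rough stand-in. The `Receiver` class, its methods, and the example terms are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Receiver:
    """Hypothetical "receiver" in an I/you interaction (elements H, K, L)."""
    # How this receiver reads each term; unlisted terms are taken literally.
    interpretation: dict[str, str] = field(default_factory=dict)

    def reproduce(self, message: set[str]) -> set[str]:
        # H and K: the reproduction can differ from what the "sender" sent.
        return {self.interpretation.get(term, term) for term in message}

    def check_consistency(self, goal_msg: set[str], method_msg: set[str]) -> set[str]:
        # L: rebuild the goal/method loop internally and return any goal terms
        # that the reproduced method does not support (empty set means closure).
        goal = self.reproduce(goal_msg)
        method = self.reproduce(method_msg)
        return goal - method


goal_msg = {"durable", "low cost"}             # G: communication about goal
method_msg = {"durable", "premium materials"}  # J: communication about method

literal = Receiver()
print(literal.check_consistency(goal_msg, method_msg))  # {'low cost'}

charitable = Receiver({"low cost": "low lifetime cost",
                       "premium materials": "low lifetime cost"})
print(charitable.check_consistency(goal_msg, method_msg))  # set()
```

The same pair of messages exposes an inconsistency for one receiver and yields closure for the other. In this sketch, at least, the consistency check belongs to the receiver's reproduction rather than to the messages alone.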

It is always important to remember that references to "goal" or "method" are relative to a given pair of vertically adjacent boxes; moving up or down the hierarchy changes the attribution of "goal" or "method" for a given box. These attributions are always relative to a specific neighbor.

For simplicity, the Figure does not show potential responses, from right to left, to any given communication. Such iterative exchanges over time constitute conversation.

4. Checking a Model

It may require discipline, but the following questions should be asked at all points in the model (using the same labels as in Figures 1 and 2):

A: (Relative to a given box interpreted as a "goal") Is the level of organization well described by the name of the processes in the box at this level?

B: (Relative to a lower level "method") Is the level of organization well described by the name of the processes in the box at this level?

C: Does the upper level really determine (i.e., control, require, cause to occur) the execution of the processes in the lower level to which it connects? What is the mechanism that imposes that control (e.g., memorandum, budget approval)?

D: Is there really a return of information from the lower level to the upper? What is the mechanism that returns a description to the upper level (e.g., internal report or survey)?

E: Does the execution of the "lower" level really achieve the stated goal of the "upper" level? What is the mechanism for taking the description returned to the upper level and comparing the result to the stated goal?

F: Does the entire loop get repeated and are adjustments made for improvement over time? What is the mechanism for those adjustments being made?

G: Is there a communication between participants at this level? What form does it take (memo, verbal statement, legal contract, TV advertisement, ...)?

H: Is a (sufficiently) correct message received?

I: Is the inferred higher goal consistent with the "sender's"?

J: Is there a communication at this level? What form does it take?

K: Is a (sufficiently) correct message received?

L: Is there consistency (closure) between the communicated levels?

If any of the above questions A through F is answered in the negative, or a mechanism is missing, the system is not intelligent and is subject to pathologies. If any of the questions G through K is answered in the negative, miscommunication has taken place or is likely to. If there is no closure in L, either accidental misunderstanding or intentional miscommunication has occurred. (Of course, it is possible, though less likely, that the "receiver" infers closure in item L for a message that is not the one the "sender" intended. This too can be modeled, but only by providing an additional dimension: the content of the messages themselves.)
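The decision rules above can be written as a small Python function. The sketch makes two assumptions:

- each question is recorded as a yes/no answer keyed by its label;
- an unanswered question counts as a negative answer, mirroring the rule that a missing mechanism counts against the system.

The function name and the wording of the findings are hypothetical.

```python
VERTICAL = "ABCDEF"     # questions about the it-referenced loop
TRANSMISSION = "GHIJK"  # questions about each communicated level


def diagnose(answers: dict[str, bool]) -> list[str]:
    """Apply the checking rules to yes/no answers keyed by label A-L.

    A missing answer is treated as "no" (an assumption of this sketch).
    """
    findings = []
    if not all(answers.get(q, False) for q in VERTICAL):
        findings.append("not intelligent; subject to pathologies")
    if not all(answers.get(q, False) for q in TRANSMISSION):
        findings.append("miscommunication has occurred or is likely")
    if not answers.get("L", False):
        findings.append("no closure: misunderstanding or intentional miscommunication")
    return findings


survey = {q: True for q in "ABCDEFGHIJKL"}
survey["D"] = False  # e.g., no report ever returns to upper management
print(diagnose(survey))  # ['not intelligent; subject to pathologies']
```

The function only records which rule fired. It cannot detect the parenthetical case above, where closure in L is inferred for the wrong message, because that requires modeling message content.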

-end-
